Sensing Infrastructure | NTU

Digital Twin Scanner

Hardware-synchronized multi-sensor platform for large-scale 3D reconstruction

Overview

Designed and built a hardware-synchronized multi-sensor scanning platform (LiDAR + IMU + 360° camera) that uses a pulse-per-second (PPS) signal for time synchronization. The system produces high-fidelity 3D reconstructions (colored point clouds, textured meshes, and 3D Gaussian Splatting scenes) for large-scale digital twin applications.

Key Achievement: Large-scale multi-sensor scanning platform producing photorealistic 3D reconstructions across three output modalities.
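To illustrate what PPS time synchronization makes possible, here is a minimal sketch of mapping sensor-local timestamps onto a shared time base and pairing a camera frame with the nearest LiDAR scan. It is an illustration under stated assumptions, not the platform's actual firmware or driver code: the function names, the idea that each sensor records its local clock reading at every pulse, and the sample values are all hypothetical.

```python
from bisect import bisect_right


def to_shared_time(local_ts, pps_local, pps_shared):
    """Map a sensor-local timestamp onto the shared time base.

    pps_local[i]  : the sensor's local clock reading when pulse i arrived
    pps_shared[i] : the shared-clock time of that same pulse
    Both lists are assumed sorted and of equal length (hypothetical layout).
    """
    i = bisect_right(pps_local, local_ts) - 1  # latest pulse at or before the sample
    if i < 0:
        raise ValueError("timestamp precedes the first PPS pulse")
    # Offset since the pulse is taken from the local clock; drift within
    # one pulse interval is ignored in this sketch.
    return pps_shared[i] + (local_ts - pps_local[i])


def nearest_index(sorted_times, t):
    """Return the index of the entry in sorted_times closest to t."""
    j = bisect_right(sorted_times, t)
    candidates = [k for k in (j - 1, j) if 0 <= k < len(sorted_times)]
    return min(candidates, key=lambda k: abs(sorted_times[k] - t))


# Hypothetical example: the camera clock runs slightly fast (1 ms per second).
pps_local = [5.000, 6.001, 7.002]
pps_shared = [100.0, 101.0, 102.0]
frame_time = to_shared_time(6.501, pps_local, pps_shared)

lidar_scan_times = [101.3, 101.4, 101.5, 101.6]
match = nearest_index(lidar_scan_times, frame_time)
```

Because every pulse re-anchors the local clock, the residual error in this scheme is bounded by the drift accumulated within a single pulse interval.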

Scanner

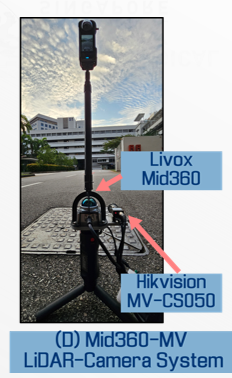

Gen 1 — Handheld LiDAR-Camera Scanner (Livox Mid-360 + Industrial Camera)

Gen 2 — Backpack Multi-Sensor Platform (LiDAR + IMU + 360° Camera + Compute Unit)

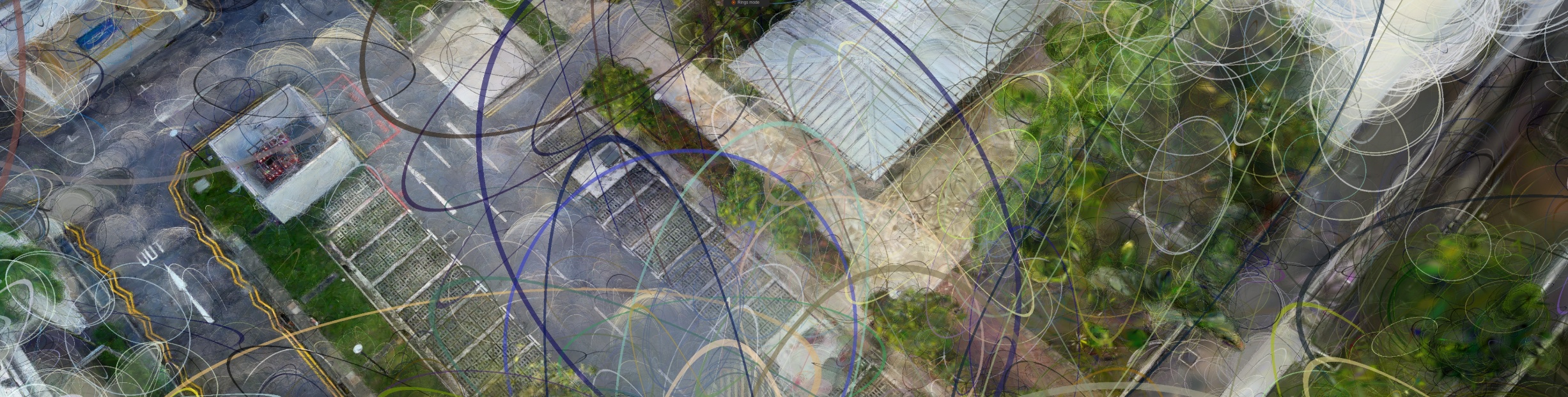

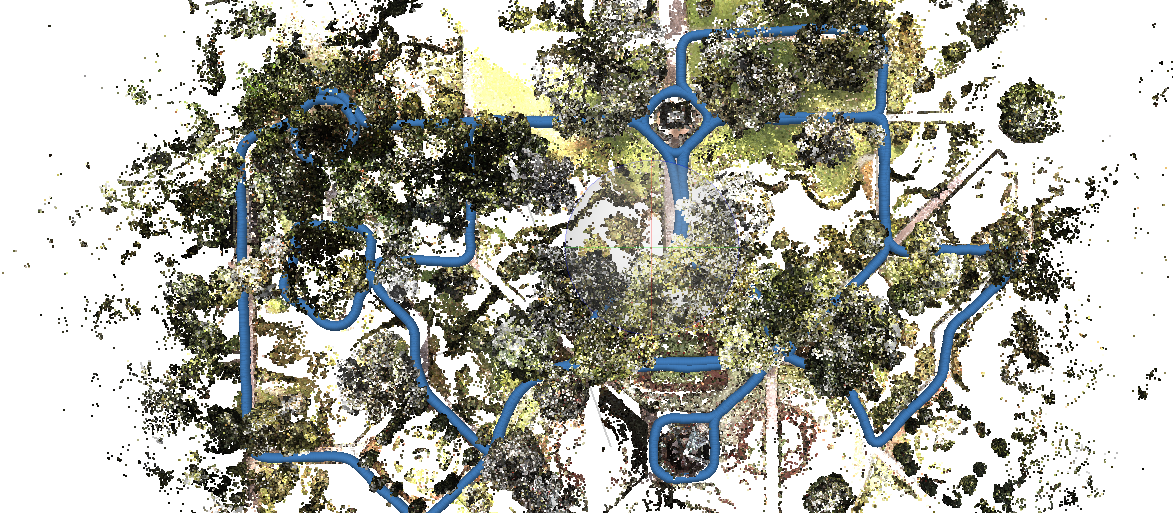

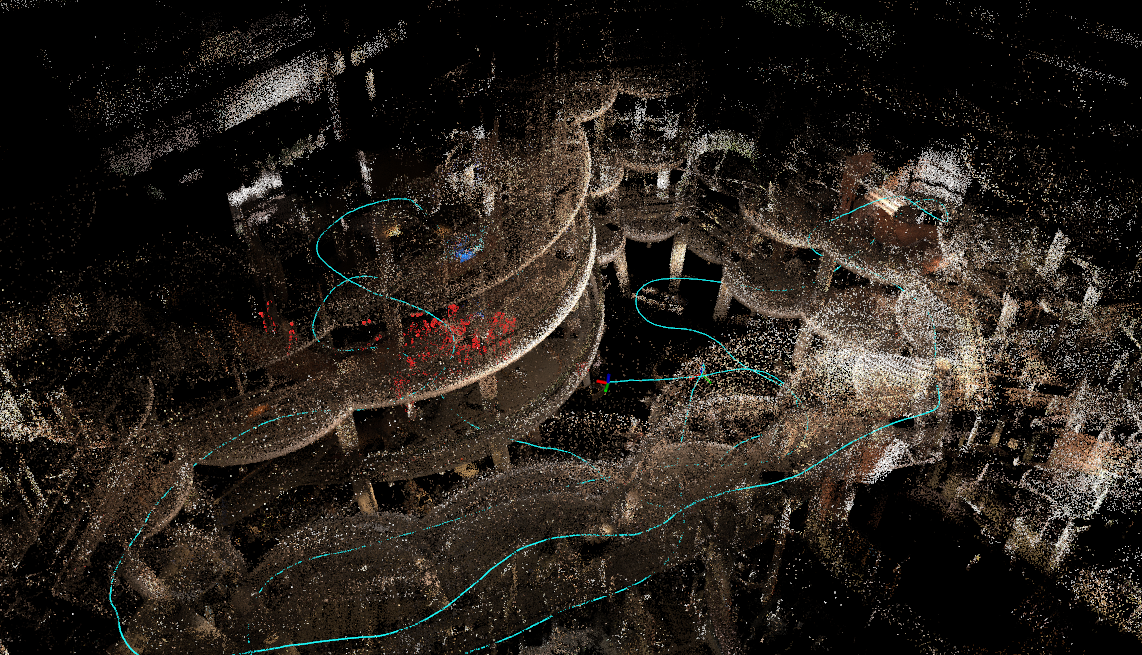

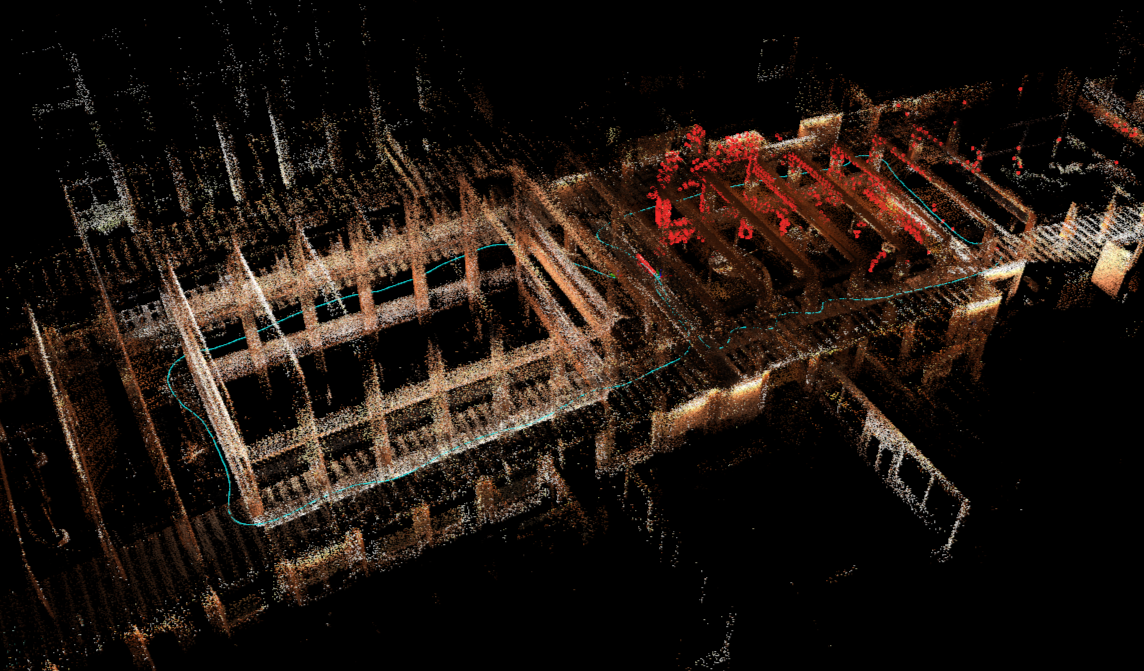

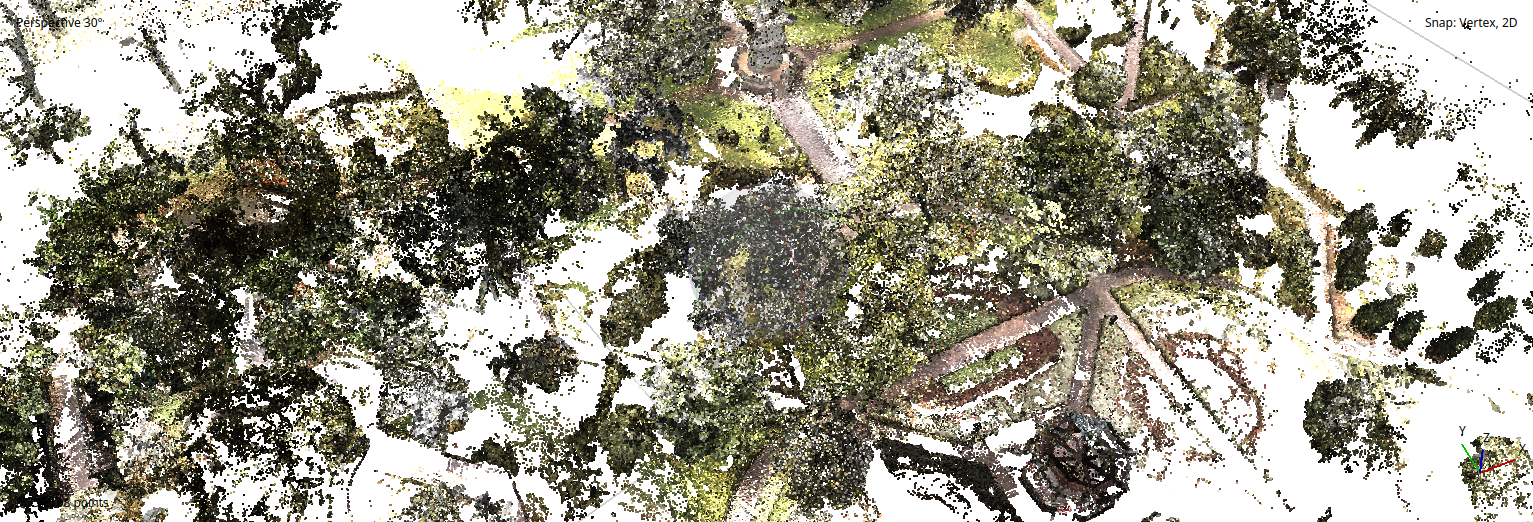

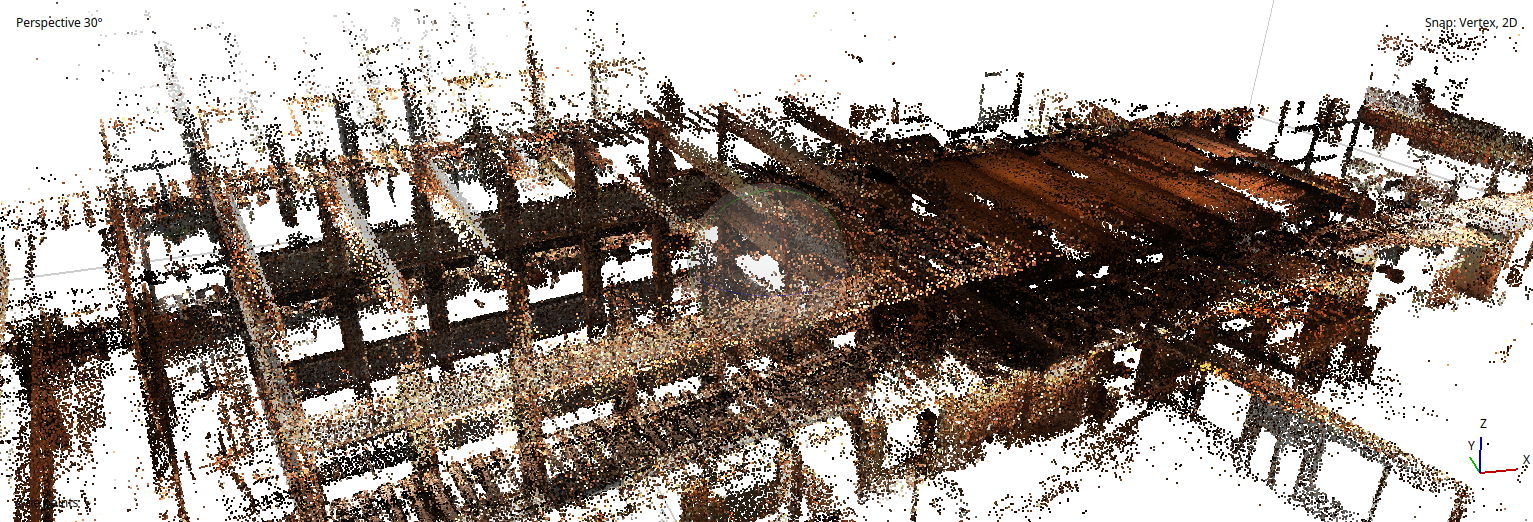

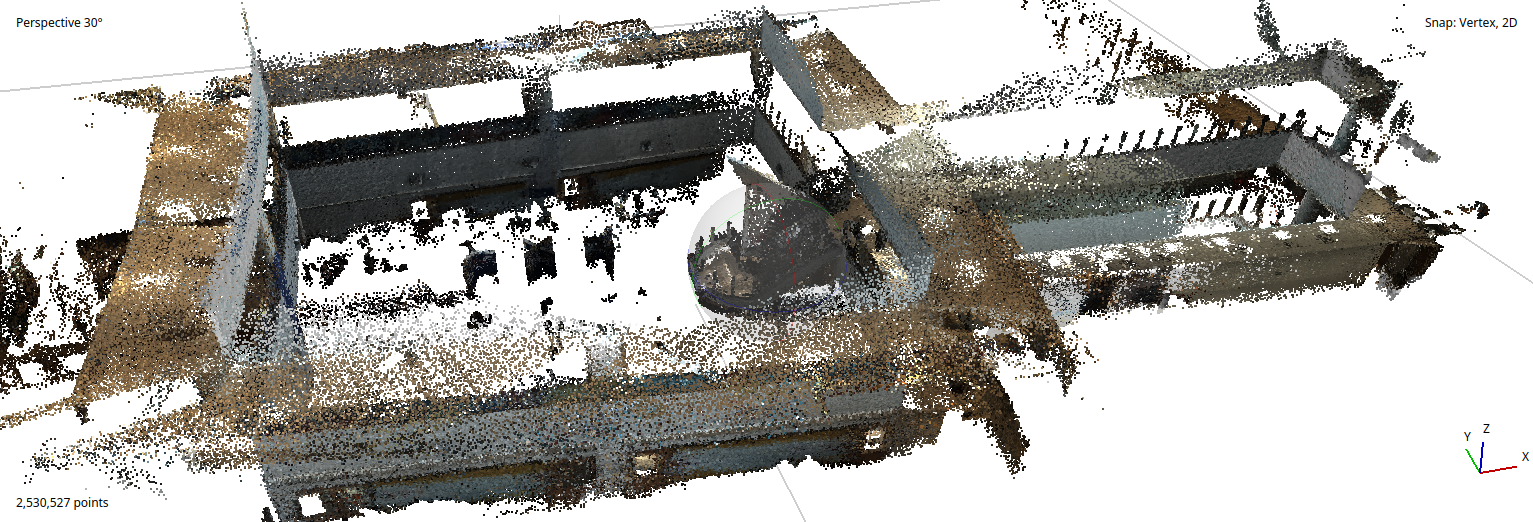

3D Point Cloud Reconstruction

Dense colored point clouds generated from LiDAR-inertial odometry fused with 360° imagery. Each scan captures millions of points with centimeter-level accuracy across campus-scale environments.
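One common way to color LiDAR points from 360° imagery is to project each point into an equirectangular panorama and sample the pixel it lands on. The sketch below shows that projection only. It assumes the points are already expressed in the camera frame (the LiDAR-to-camera extrinsic transform has been applied), and it assumes an axis convention of x forward, y left, z up with the image center looking along +x. The function names and the convention are illustrative assumptions, not details taken from this platform.

```python
import math


def project_equirect(point, width, height):
    """Project a camera-frame 3D point to an equirectangular pixel (col, row).

    Assumed convention: x forward, y left, z up; the image center looks
    along +x, and the top row corresponds to straight up.
    """
    x, y, z = point
    r = math.sqrt(x * x + y * y + z * z)
    if r == 0.0:
        raise ValueError("point coincides with the camera center")
    lon = math.atan2(y, x)                        # azimuth in (-pi, pi]
    lat = math.asin(max(-1.0, min(1.0, z / r)))   # elevation in [-pi/2, pi/2]
    u = (0.5 - lon / (2.0 * math.pi)) * width     # left of center -> smaller u
    v = (0.5 - lat / math.pi) * height            # above horizon -> smaller v
    col = int(u) % width                          # wrap around the seam
    row = min(int(v), height - 1)                 # clamp the bottom pole
    return col, row


def colorize(points, image):
    """Attach the sampled pixel to each point.

    image is a list of rows, each row a list of RGB tuples.
    """
    height, width = len(image), len(image[0])
    colored = []
    for p in points:
        col, row = project_equirect(p, width, height)
        colored.append((p, image[row][col]))
    return colored
```

A production pipeline would also need occlusion handling and per-point time alignment between the scan and the frame, both of which this sketch omits.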

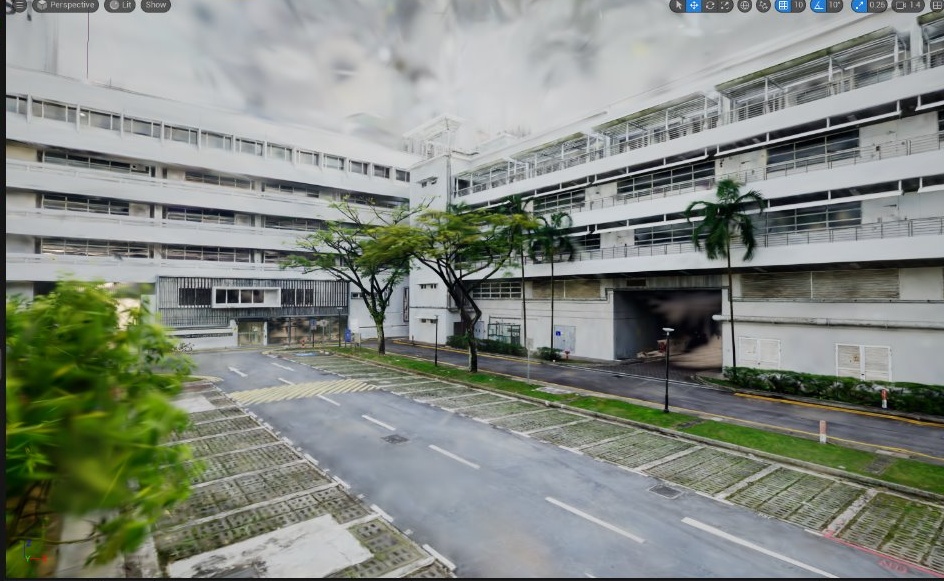

Textured Mesh Reconstruction

Watertight mesh models reconstructed from point clouds with texture mapping from camera imagery. Suitable for Unreal Engine integration and real-time simulation environments.
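"Watertight" has a simple combinatorial test for triangle meshes: in a closed surface, every edge is shared by exactly two triangles. The sketch below checks that property on a list of vertex-index triples. It is a generic check written for illustration, not the validation step used in this pipeline, and it does not test for self-intersections or consistent face orientation.

```python
from collections import Counter


def is_watertight(faces):
    """Return True if every edge is shared by exactly two triangles.

    faces is a list of (a, b, c) vertex-index triples.
    """
    edge_count = Counter()
    for a, b, c in faces:
        for u, v in ((a, b), (b, c), (c, a)):
            edge_count[(min(u, v), max(u, v))] += 1  # undirected edge key
    return bool(edge_count) and all(n == 2 for n in edge_count.values())


# A tetrahedron is the smallest closed triangle mesh.
tetrahedron = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (2, 3, 0)]
closed = is_watertight(tetrahedron)        # all six edges appear twice
opened = is_watertight(tetrahedron[:3])    # removing a face leaves boundary edges
```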

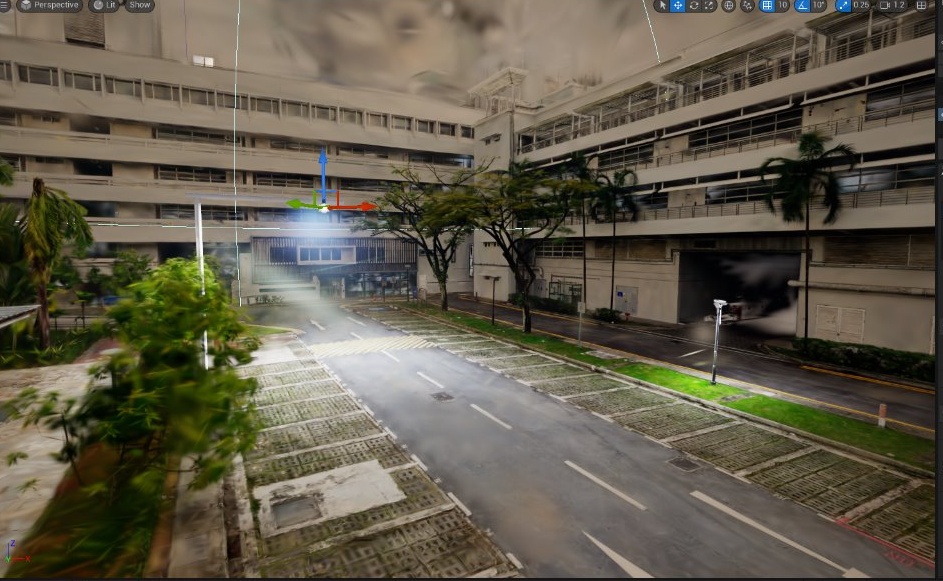

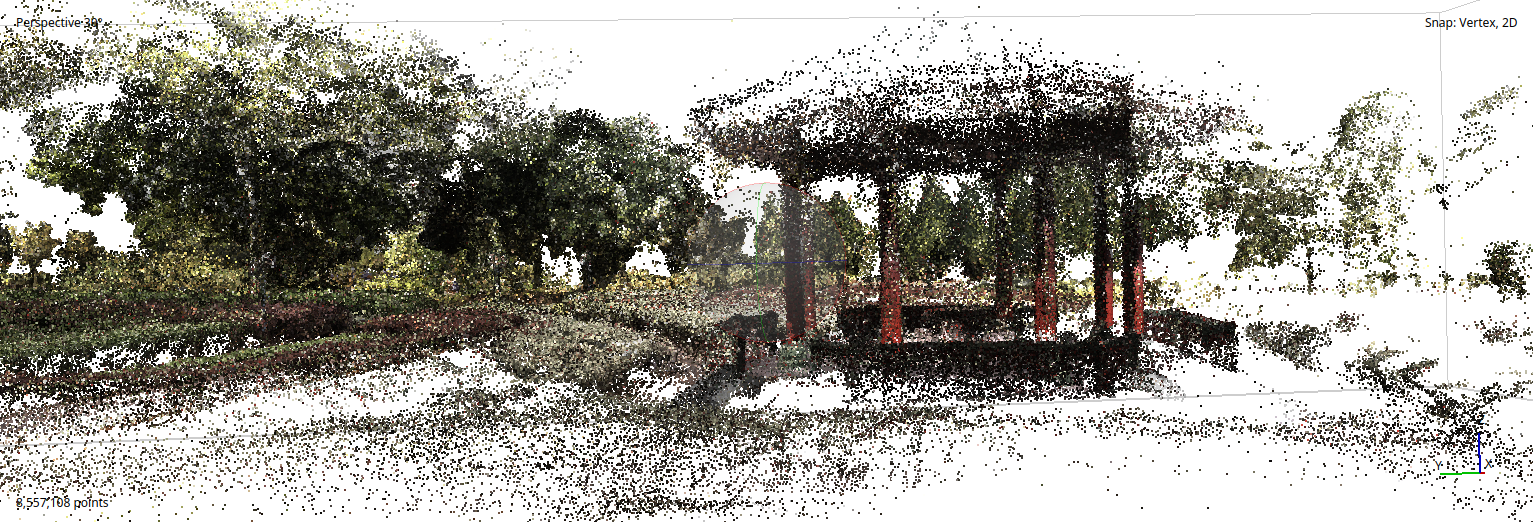

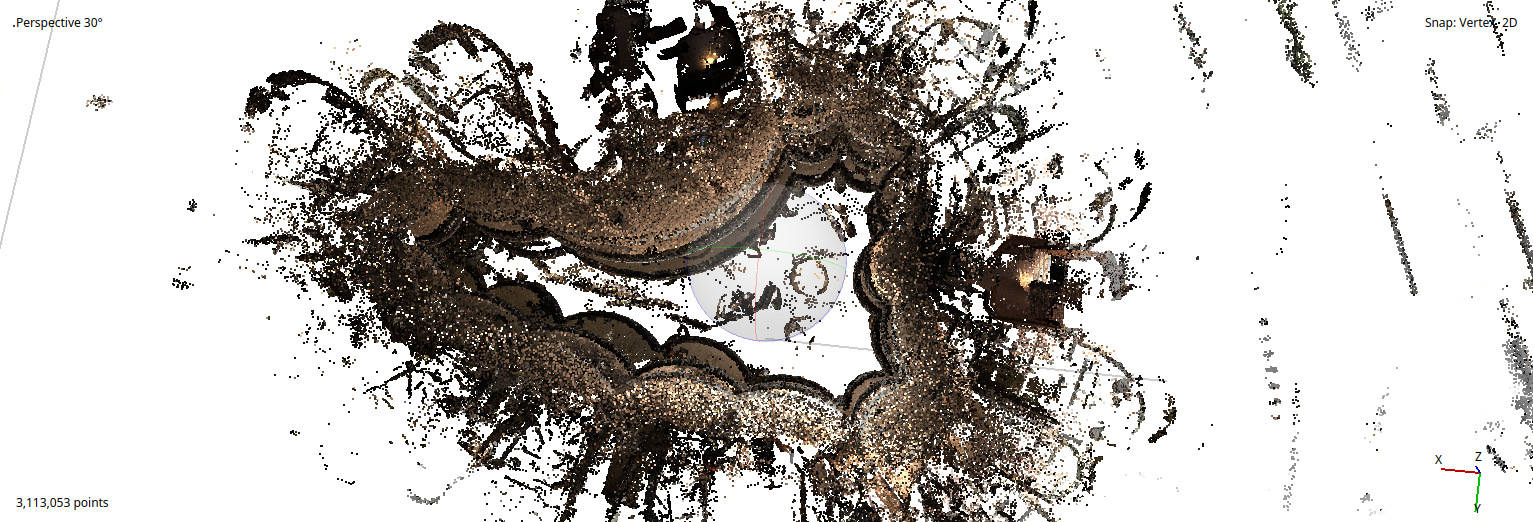

3D Gaussian Splatting

Novel view synthesis using 3D Gaussian Splatting trained on the captured multi-view imagery. Enables photorealistic real-time rendering of reconstructed scenes.
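At render time, 3D Gaussian Splatting blends the depth-sorted splats that cover a pixel with front-to-back alpha compositing: each splat contributes its color weighted by its opacity and by the transmittance left over from the splats in front of it. The sketch below shows that blending step for a single pixel. It assumes the per-splat opacity at the pixel has already been evaluated, and the early-exit threshold is an illustrative choice rather than a setting from this project.

```python
def composite(splats, min_transmittance=1e-4):
    """Blend splats covering one pixel, front to back.

    splats is a list of (rgb, alpha) pairs sorted nearest first, where
    alpha is the splat's opacity already evaluated at this pixel.
    Returns the blended RGB and the remaining transmittance.
    """
    color = [0.0, 0.0, 0.0]
    transmittance = 1.0
    for rgb, alpha in splats:
        weight = alpha * transmittance        # light this splat contributes
        for k in range(3):
            color[k] += weight * rgb[k]
        transmittance *= 1.0 - alpha          # light passing on to splats behind
        if transmittance < min_transmittance:
            break                             # pixel is effectively opaque
    return tuple(color), transmittance


# A half-transparent red splat in front of a half-transparent green one.
pixel, remaining = composite([((1.0, 0.0, 0.0), 0.5), ((0.0, 1.0, 0.0), 0.5)])
```

The early exit is part of what keeps rendering fast: once a pixel is nearly opaque, splats behind it cannot change the result visibly and are skipped.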