Research | NTU

Multi-Robot Intelligence

Multi-robot coordination, semantic mapping, and leader-follower formation — 3 journal + 5 conference papers

2019 – 2023

Overview

A four-year research program that grew from a single question: how do cooperative robots build a shared semantic understanding of the world? The answer became a hierarchical probabilistic framework for collaborative semantic map fusion, which then branched into cross-robot relative localization, aerial-ground heterogeneous mapping, and multi-vehicle formation control.

Hierarchical Probabilistic Semantic Mapping Framework

T-Mech 2020 · ICRA 2020 · CIS-RAM 2019

EM-based hidden data association + Bayesian semantic-occupancy fusion

How do robots align their maps?

Cross-Robot Relative Localization

T-IE 2021 (COSEM) · IROS 2020

Joint semantic + geometric data association via EM

How do heterogeneous platforms fuse?

UAV-UGV Heterogeneous Collaborative Mapping

ICRA 2022

Information-gain trigger for multi-resolution hybrid fusion

From perception to coordination

Leader-Follower Platoon Cruise Control

T-ITS 2023

Perception-matching localization → planning → control

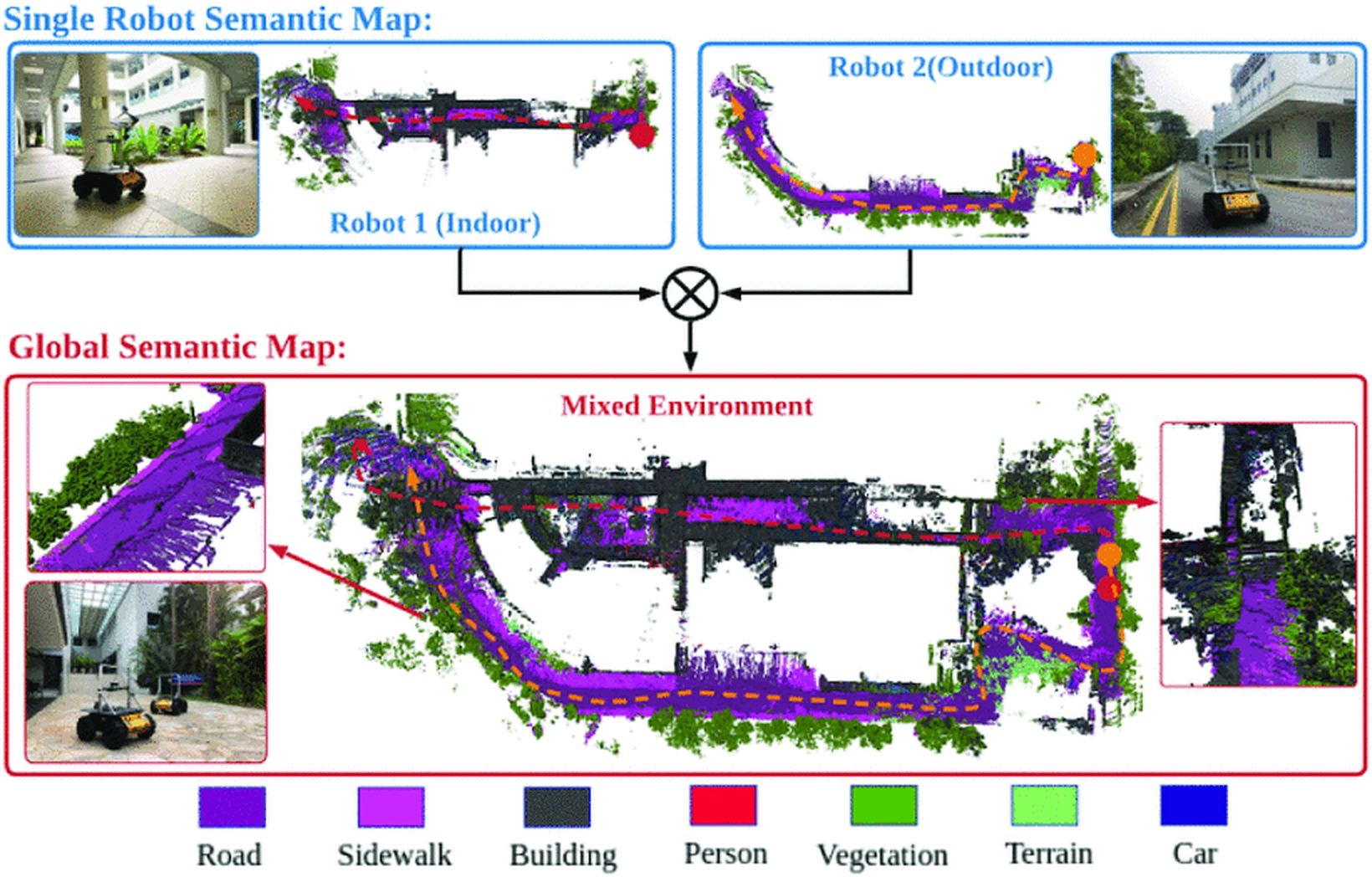

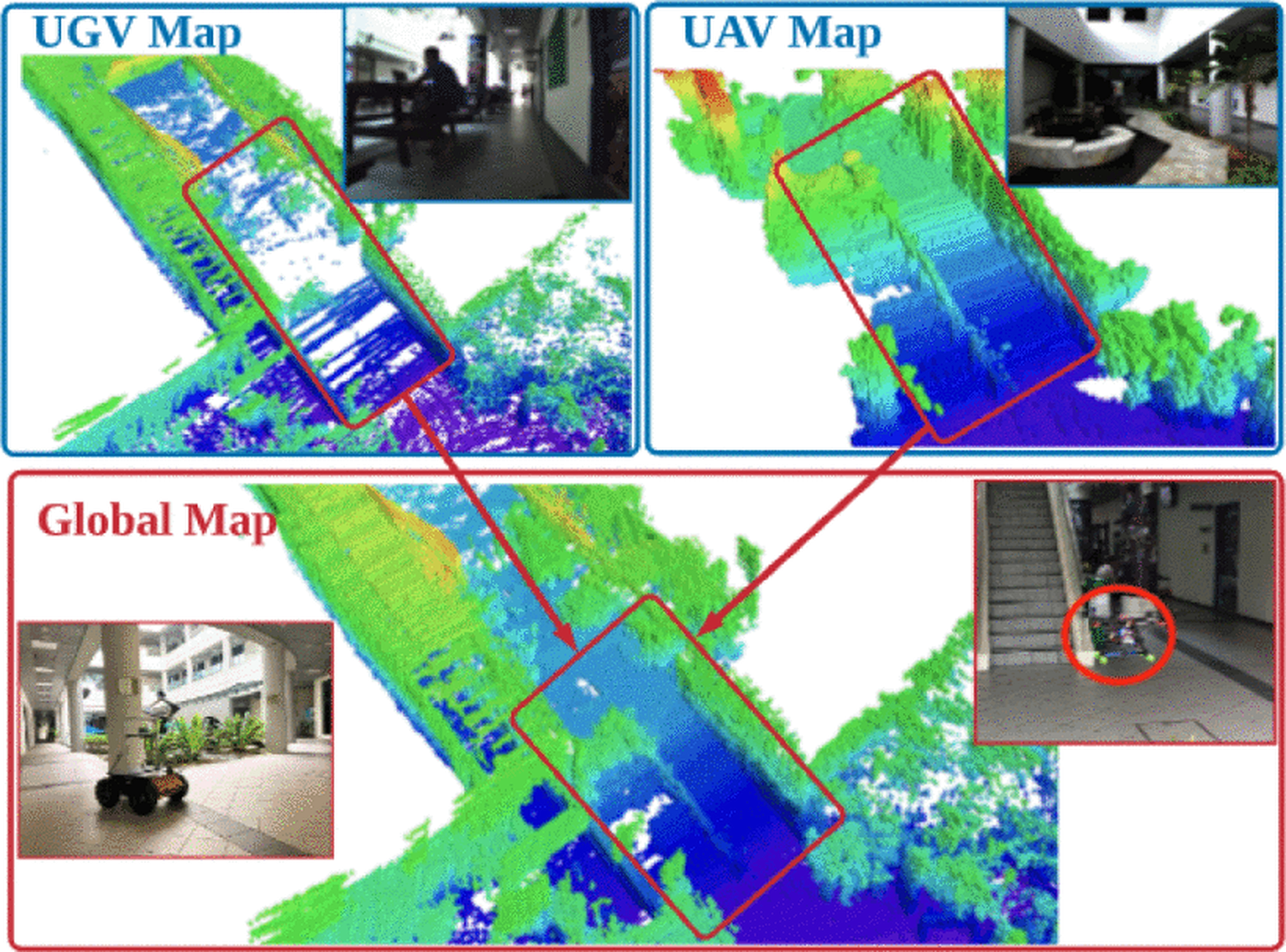

Two robots independently map indoor and outdoor environments, then fuse their semantic maps into a unified global representation

Hierarchical Probabilistic Semantic Mapping Framework

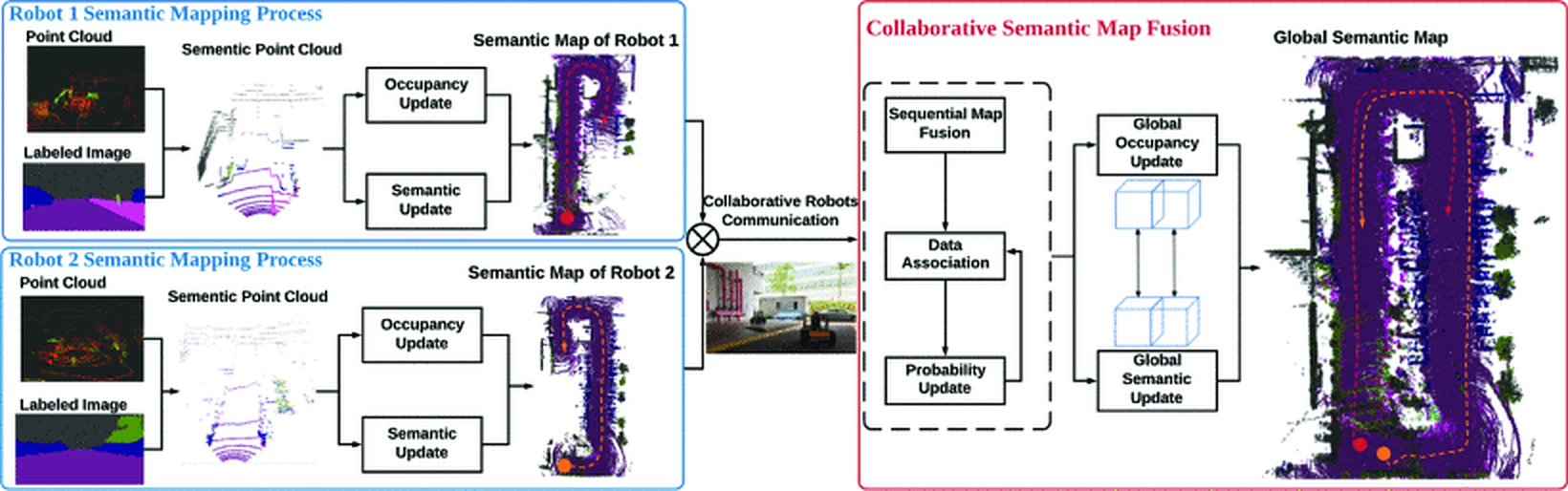

The theoretical foundation. The key contribution is a mathematical model of the hierarchical semantic map fusion framework and its probability decomposition. At the single-robot level, information from heterogeneous sensors is fused into a semantic point cloud, which is then converted into a local probabilistic semantic map via Bayesian label and occupancy updates. At the multi-robot level, voxel correspondences between local maps are unknown, so an Expectation-Maximization approach estimates the hidden data associations, and Bayes' rule merges the semantic and occupancy probabilities into a globally consistent map.
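To make the Bayesian merge step concrete, here is a minimal sketch of fusing one voxel observed by two robots. It is an illustration, not the formulation from the papers: it assumes the voxel correspondence is already known, that the two observations are conditionally independent, and that the prior is uniform. The function names `fuse_labels` and `fuse_occupancy` and the class list are hypothetical.

```python
import math

# Hypothetical class list, taken from the figure caption on this page.
CLASSES = ("road", "building", "vegetation", "terrain")

def fuse_labels(p_a, p_b, prior=None):
    """Fuse two categorical label distributions for the same voxel.

    Assumes conditionally independent observations, so the posterior is
    proportional to p_a * p_b / prior (uniform prior by default).
    """
    n = len(p_a)
    prior = prior or [1.0 / n] * n
    unnormalized = [a * b / q for a, b, q in zip(p_a, p_b, prior)]
    total = sum(unnormalized)
    return [u / total for u in unnormalized]

def logit(p):
    # Valid only for 0 < p < 1; real maps usually clamp probabilities.
    return math.log(p / (1.0 - p))

def fuse_occupancy(p_a, p_b, prior=0.5):
    """Fuse two occupancy probabilities by adding log-odds."""
    log_odds = logit(p_a) + logit(p_b) - logit(prior)
    return 1.0 / (1.0 + math.exp(-log_odds))

# Two robots mostly agree that the voxel is "road" and occupied.
fused_labels = fuse_labels([0.7, 0.1, 0.1, 0.1], [0.6, 0.2, 0.1, 0.1])
fused_occupancy = fuse_occupancy(0.7, 0.8)
```

When both robots favor the same class, the fused distribution is sharper than either input, which is the behavior a consistent global map relies on.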

Collaborative Semantic Understanding and Mapping Framework for Autonomous Systems

Yufeng Yue, Chunyang Zhao, Zhenyu Wu, Chule Yang, Yuanzhe Wang, Danwei Wang

A Hierarchical Framework for Collaborative Probabilistic Semantic Mapping

Yufeng Yue, Chunyang Zhao, Ruilin Li, Chule Yang, Jun Zhang, Mingxing Wen, Yuanzhe Wang, Danwei Wang

Probabilistic 3D Semantic Map Fusion Based on Bayesian Rule

Yufeng Yue, Ruilin Li, Chunyang Zhao, Chule Yang, Jun Zhang, Mingxing Wen, Guohao Peng, Zhenyu Wu, Danwei Wang

Two-level pipeline: per-robot heterogeneous sensor fusion → semantic point cloud → EM-based collaborative map fusion → global semantic map

Semantic voxel maps: each voxel carries a probabilistic class label (road, building, vegetation, terrain)

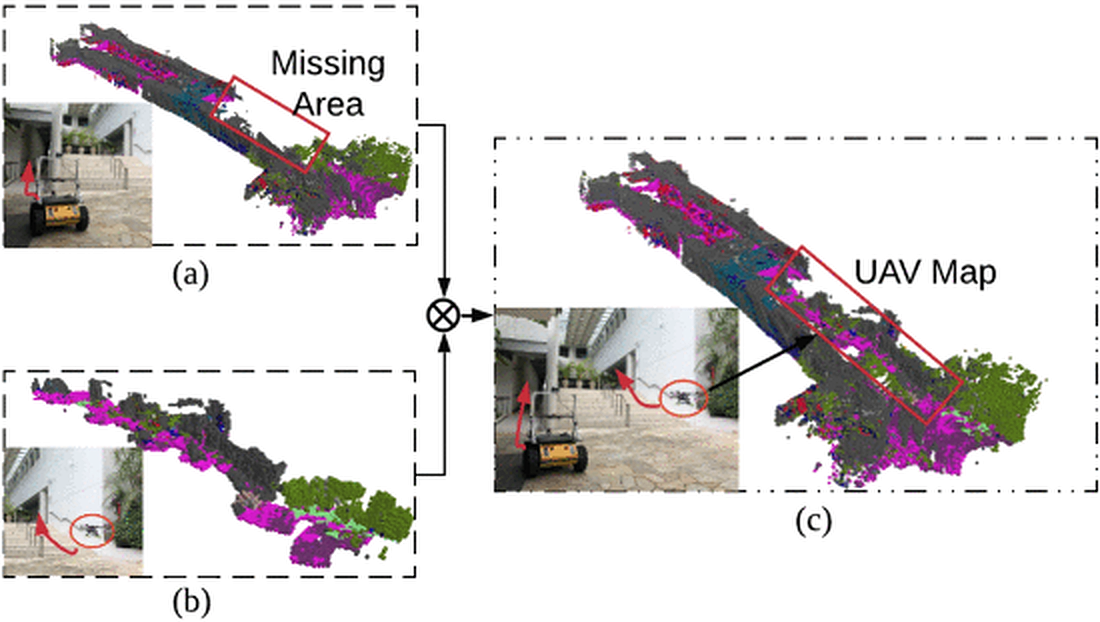

Distributed fusion: UAV and UGV maps are merged — the combined map is more complete than either alone

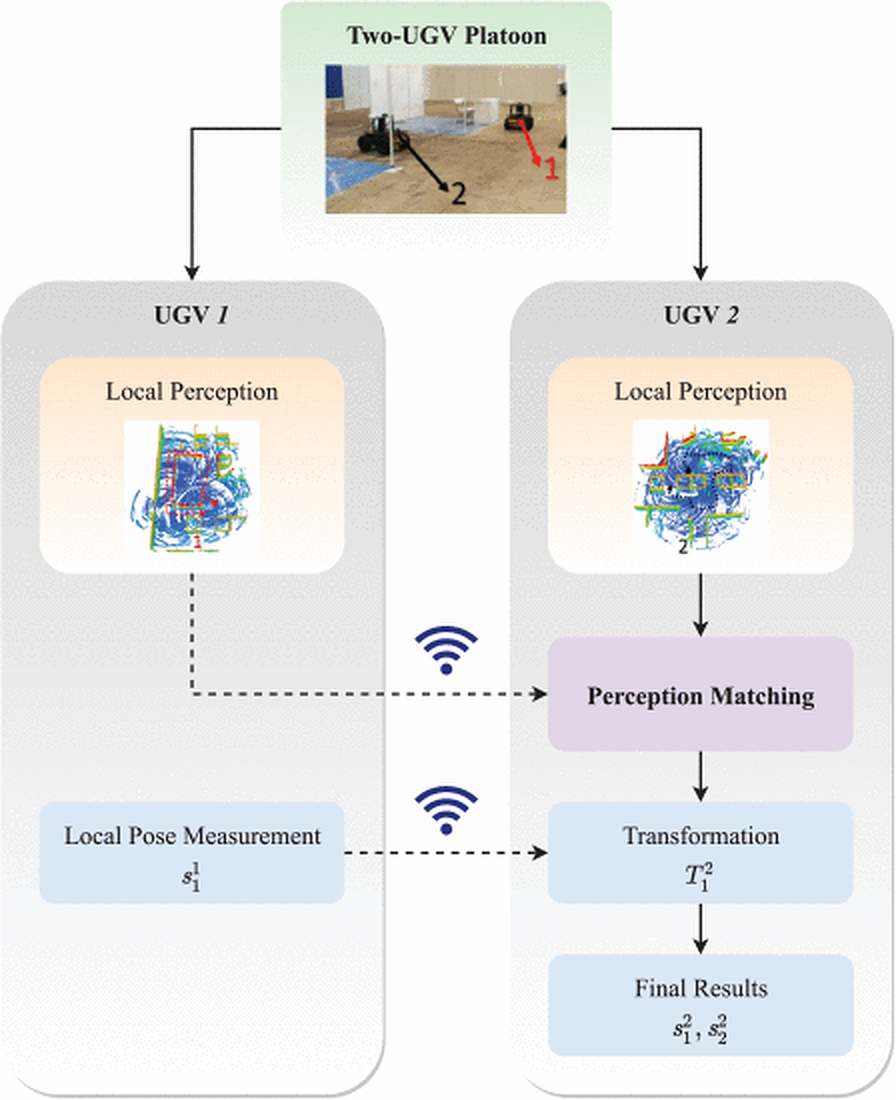

Cross-Robot Relative Localization via Semantic Map Matching

A prerequisite for map fusion is determining the relative pose between robots that share no common reference frame. Existing approaches rely on low-level geometric features (points, lines, planes), which fail when the initial error is large or the overlap is low. COSEM jointly performs multimodal information fusion, semantic data association, and optimization in a unified framework. Instead of committing to hard geometric data associations, it estimates semantic and geometric associations jointly via Expectation-Maximization. The result is a rigid transformation matrix that aligns the two semantic maps.
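The idea of soft, joint semantic and geometric association can be sketched in a toy 2D setting. This is not the COSEM algorithm itself: it assumes labeled 2D points instead of 3D semantic voxels, a fixed Gaussian distance kernel, and a simple label-agreement factor. The names `em_align`, `sigma`, `p_same`, and `p_diff` are hypothetical.

```python
import math

def transform(point, theta, tx, ty):
    """Apply a 2D rigid transform: rotate by theta, then translate."""
    x, y = point
    c, s = math.cos(theta), math.sin(theta)
    return (c * x - s * y + tx, s * x + c * y + ty)

def em_align(src, dst, sigma=0.5, p_same=0.9, p_diff=0.1, iters=20):
    """Estimate (theta, tx, ty) mapping src onto dst.

    src, dst: lists of (x, y, label). Associations are hidden variables.
    """
    theta, tx, ty = 0.0, 0.0, 0.0
    for _ in range(iters):
        # E-step: soft association weights from distance and label agreement.
        pairs = []
        for (x, y, label_s) in src:
            px, py = transform((x, y), theta, tx, ty)
            weights = []
            for (u, v, label_d) in dst:
                d2 = (px - u) ** 2 + (py - v) ** 2
                semantic = p_same if label_s == label_d else p_diff
                weights.append(semantic * math.exp(-d2 / (2.0 * sigma ** 2)))
            total = sum(weights)
            if total == 0.0:
                continue  # no plausible match for this point
            for w, (u, v, _label) in zip(weights, dst):
                pairs.append((w / total, x, y, u, v))
        if not pairs:
            break
        # M-step: closed-form weighted rigid alignment in 2D.
        W = sum(p[0] for p in pairs)
        mx = sum(w * x for w, x, y, u, v in pairs) / W
        my = sum(w * y for w, x, y, u, v in pairs) / W
        mu = sum(w * u for w, x, y, u, v in pairs) / W
        mv = sum(w * v for w, x, y, u, v in pairs) / W
        dot = sum(w * ((x - mx) * (u - mu) + (y - my) * (v - mv))
                  for w, x, y, u, v in pairs)
        cross = sum(w * ((x - mx) * (v - mv) - (y - my) * (u - mu))
                    for w, x, y, u, v in pairs)
        theta = math.atan2(cross, dot)
        c, s = math.cos(theta), math.sin(theta)
        tx = mu - (c * mx - s * my)
        ty = mv - (s * mx + c * my)
    return theta, tx, ty

# Synthetic check: dst is src moved by a known rigid transform.
src = [(0.0, 0.0, "road"), (4.0, 0.0, "building"), (4.0, 3.0, "vegetation"),
       (0.0, 3.0, "terrain"), (2.0, 5.0, "building")]
dst = [transform((x, y), 0.2, 0.5, -0.3) + (label,) for x, y, label in src]
theta, tx, ty = em_align(src, dst)
```

The semantic factor down-weights pairs whose labels disagree, so geometrically close but semantically inconsistent matches contribute little to the pose estimate.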

COSEM: Collaborative Semantic Map Matching Framework for Autonomous Robots

Yufeng Yue, Mingxing Wen, Chunyang Zhao, Yuanzhe Wang, Danwei Wang

Collaborative Semantic Perception and Relative Localization Based on Map Matching

Yufeng Yue, Chunyang Zhao, Mingxing Wen, Zhenyu Wu, Danwei Wang

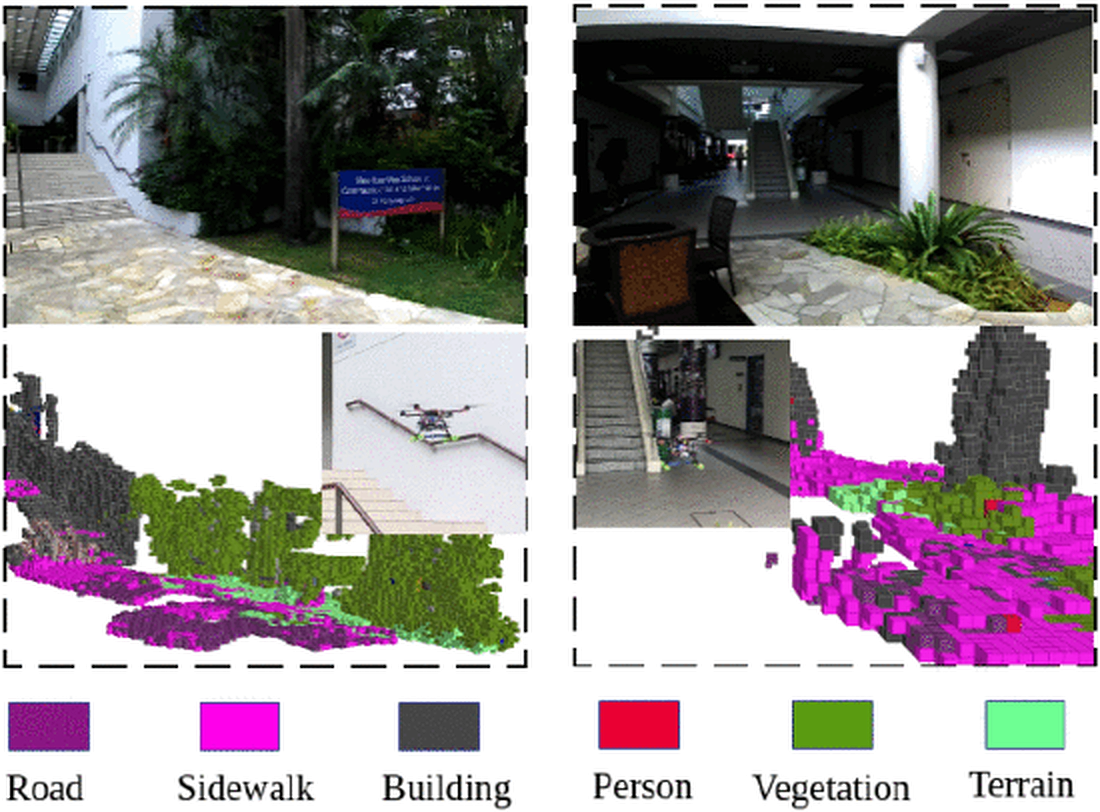

UAV-UGV Collaborative Mapping in GNSS-Denied Environments

Heterogeneous robot teams face a fundamental challenge: UAVs and UGVs differ greatly in perception, mobility, and processing capabilities. This work formulates UAV-UGV collaborative mapping with a continuous-discrete model. The key contribution is an information-gain trigger mechanism that determines when each robot should project its continuous local estimates into discrete space for multi-robot map fusion, addressing the question of when to fuse, not just how. In discrete space, a probabilistic fusion algorithm based on Bayes' rule handles multi-resolution hybrid map fusion between aerial and ground observations. The approach was validated in GNSS-denied scenarios, including a tropical rainforest and multi-level buildings.
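One plausible reading of an information-gain trigger is sketched below. It is an assumption for illustration, not the mechanism from the paper: information gain is measured as the drop in total Shannon entropy of a robot's occupancy estimates since its last fusion, and fusion fires once that drop reaches a threshold. The class `FusionTrigger` and the parameter `threshold_bits` are hypothetical.

```python
import math

def binary_entropy(p):
    """Shannon entropy (bits) of one occupancy probability."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1.0 - p) * math.log2(1.0 - p))

def map_entropy(occupancy):
    """Total entropy of a local map given per-cell occupancy probabilities."""
    return sum(binary_entropy(p) for p in occupancy)

class FusionTrigger:
    """Fire when the map has gained enough information since the last fusion."""

    def __init__(self, threshold_bits):
        self.threshold_bits = threshold_bits
        self.entropy_at_last_fusion = None

    def should_fuse(self, occupancy):
        entropy = map_entropy(occupancy)
        if self.entropy_at_last_fusion is None:
            # First call only records the baseline.
            self.entropy_at_last_fusion = entropy
            return False
        gain = self.entropy_at_last_fusion - entropy
        if gain >= self.threshold_bits:
            self.entropy_at_last_fusion = entropy
            return True
        return False

trigger = FusionTrigger(threshold_bits=1.0)
decisions = [
    trigger.should_fuse([0.5, 0.5, 0.5, 0.5]),  # baseline, all unknown
    trigger.should_fuse([0.5, 0.5, 0.5, 0.9]),  # small gain
    trigger.should_fuse([0.9, 0.9, 0.5, 0.5]),  # gain exceeds one bit
    trigger.should_fuse([0.9, 0.9, 0.5, 0.5]),  # nothing new since fusion
]
```

Gating fusion on gain rather than on a fixed timer avoids spending communication on updates that add little to the shared map.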

Aerial-Ground Robots Collaborative 3D Mapping in GNSS-Denied Environments

Yufeng Yue, Chunyang Zhao, Yuanzhe Wang, Yezhou Yang, Danwei Wang

Rainforest: UAV captures canopy, UGV captures ground-level structure — fused 3D map covers both

Two-level building: UGV maps ground floor, UAV maps upper level — merged into a multi-floor reconstruction

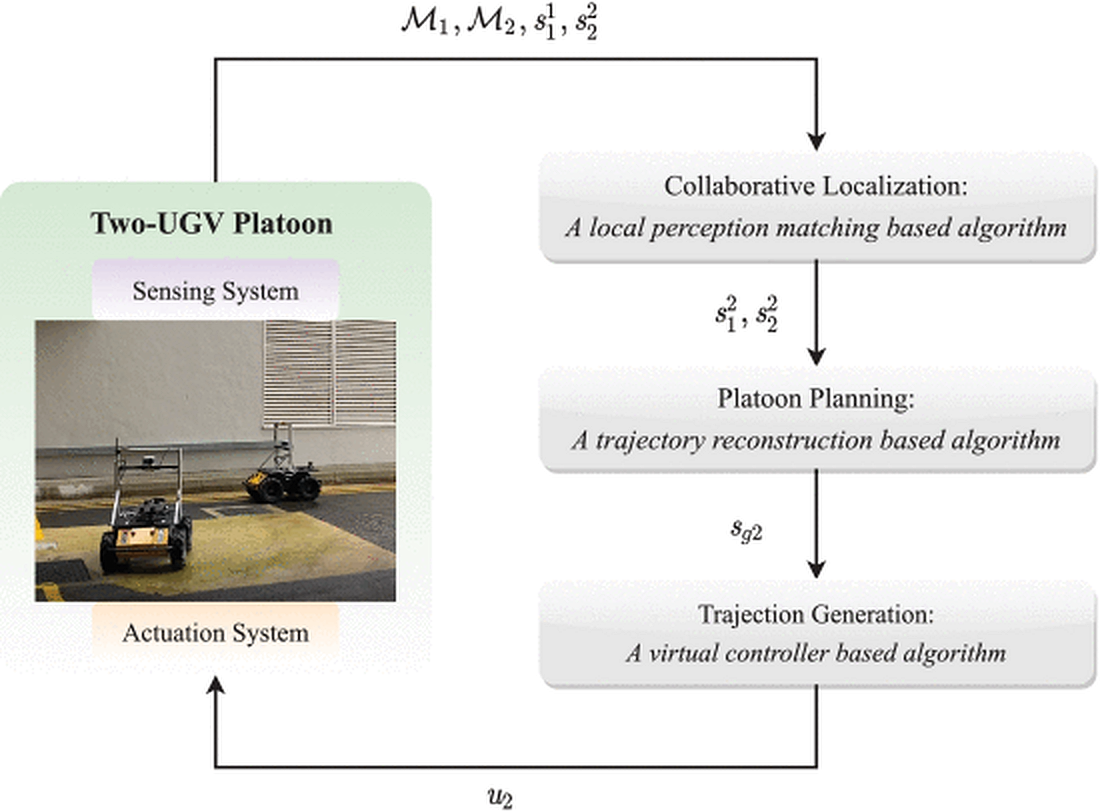

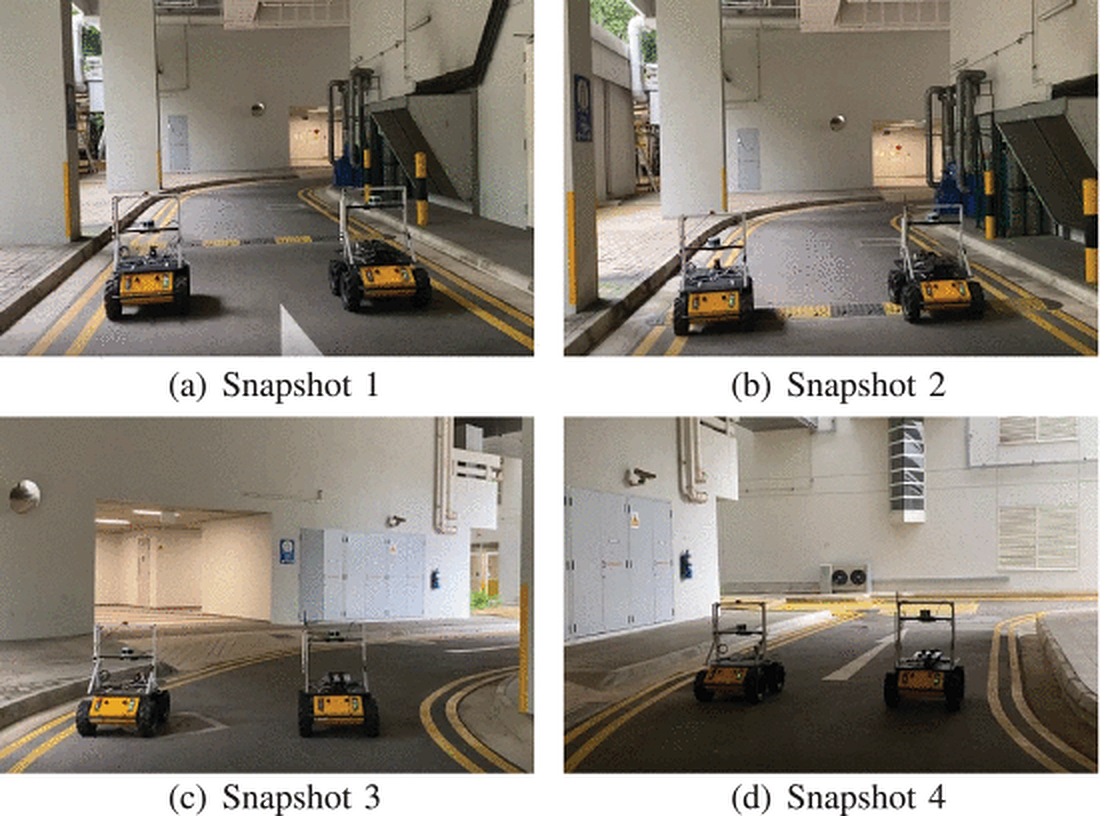

Leader-Follower Platoon Cruise Control

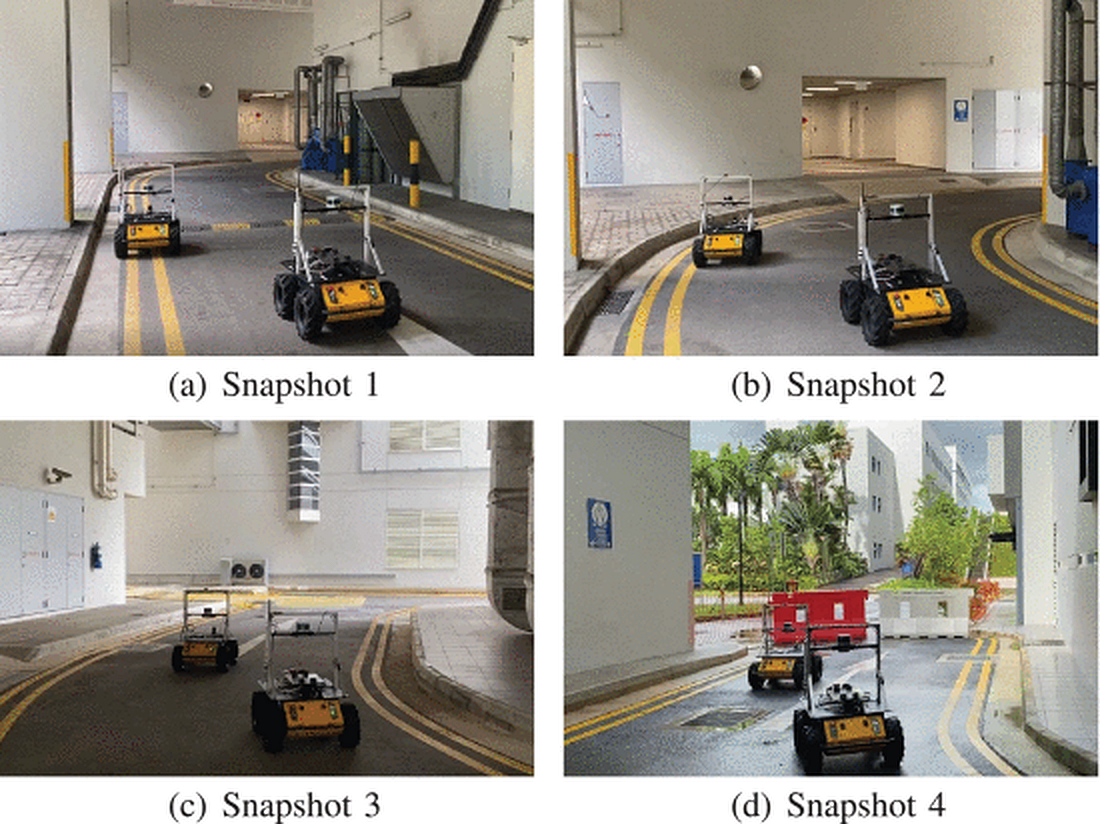

This work extends collaborative perception into coordinated multi-vehicle action. The problem is UGV platoon cruise control in environments without positioning infrastructure: no lane markings, no roadside units, no GNSS. The solution is an integrated localization and planning framework composed of three modular algorithms: collaborative localization by matching the local perceptions of different vehicles, trajectory reconstruction of the preceding vehicle to plan the follower's target state, and a virtual controller that generates feasible trajectories in real time. The framework does not depend on direct observations between vehicles, so it remains applicable in non-line-of-sight situations.
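The planning step can be illustrated with a small sketch: given the preceding vehicle's reconstructed trajectory, place the follower's target at a fixed arc-length gap behind it. This is a simplified stand-in, not the planner from the paper; it assumes a 2D polyline trajectory and a constant spacing policy. The function `follower_target` and the parameter `gap` are hypothetical.

```python
import math

def follower_target(trajectory, gap):
    """Return (x, y, heading) at arc length `gap` behind the leader.

    trajectory: list of (x, y) points, oldest first; the last point is the
    preceding vehicle's current position. Heading follows the path direction.
    """
    if not trajectory:
        raise ValueError("trajectory must contain at least one point")
    remaining = gap
    heading = 0.0
    # Walk backwards along the path from the leader's current position.
    for i in range(len(trajectory) - 1, 0, -1):
        x1, y1 = trajectory[i]
        x0, y0 = trajectory[i - 1]
        segment = math.hypot(x1 - x0, y1 - y0)
        if segment == 0.0:
            continue  # skip repeated points
        heading = math.atan2(y1 - y0, x1 - x0)
        if segment >= remaining:
            ratio = remaining / segment
            return (x1 + ratio * (x0 - x1), y1 + ratio * (y0 - y1), heading)
        remaining -= segment
    # Path is shorter than the gap: fall back to the oldest known point.
    x, y = trajectory[0]
    return (x, y, heading)

# Leader drove east, then turned north.
path = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (2.0, 1.0), (2.0, 2.0)]
target = follower_target(path, gap=1.5)
```

Because the target comes from the reconstructed path rather than a direct sighting of the leader, the follower can keep its spacing around corners where the leader is out of view.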

Integrated Localization and Planning for Cruise Control of UGV Platoons in Infrastructure-Free Environments

Yuanzhe Wang, Chunyang Zhao, Jiahao Liang, Mingxing Wen, Yufeng Yue, Danwei Wang

Three modular algorithms: collaborative localization → platoon planning → trajectory generation

Collaborative localization: matching local perceptions between vehicles without external infrastructure

Corridor following: UGV platoon maintains formation through structured environments

Indoor-to-outdoor transition: formation holds across environment changes and curved paths